Elasticsearch query json

7/29/2023

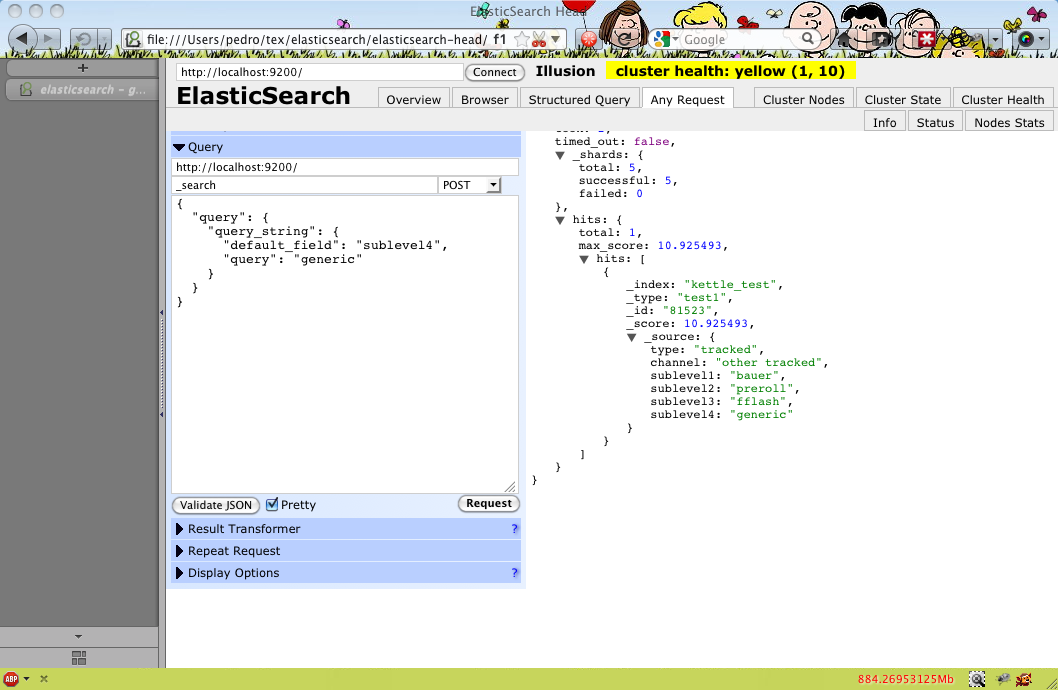

Elasticsearch is an open-source search and analytics engine with a robust REST API, a distributed architecture, and the speed and scalability to run across multiple platforms. It supports analytics on textual, numerical, and even geospatial data, and it facilitates data ingestion, storage, analysis, enrichment, and visualization.

This article deals with methods to export data from Elasticsearch and use it in tandem with other platforms, along with detailed code snippets to implement them. It also sheds some light on the challenges of manual scripting for data export, along with possible solutions to overcome them. The export methods are:

- Elasticsearch Export: Using Logstash-Input-Elasticsearch Plugin
- Elasticsearch Export: Using Elasticsearch Dump
- Elasticsearch Export: Using Python Pandas
- Hevo Data: Export your Elasticsearch Data Conveniently

This article also discusses how to parse JSON fields in Elasticsearch, which is a common requirement when dealing with log data or other structured data formats.

When ingesting JSON data into Elasticsearch, it is essential to ensure that the data is properly formatted and structured. Elasticsearch can automatically detect and map JSON fields, but defining an explicit mapping is recommended for better control over the indexing process. To create an index with a custom mapping, send a `PUT /my_index` request with the mapping in the request body. The example creates an index called `my_index` with a custom mapping for a JSON field named `json_field`. The `json_field` is of type `nested`, which allows us to index and query its subfields (`field1` and `field2`) separately.

Using the Ingest Node to parse JSON fields

If your JSON data is stored as a string within a field, you can use the Ingest Node feature in Elasticsearch to parse the JSON string and extract the relevant fields. The Ingest Node provides a set of built-in processors, including the `json` processor, which can be used to parse JSON data. To create an ingest pipeline with the `json` processor, send a `PUT _ingest/pipeline/json_parser` request. The example creates an ingest pipeline called `json_parser` that parses the JSON string stored in the `message` field and stores the resulting JSON object in a new field called `json_field`. To index a document using this pipeline, send the document to `POST /my_index/_doc?pipeline=json_parser`.

Once the JSON fields are indexed, you can query and aggregate them using the Elasticsearch Query DSL. For example, to search for documents with a specific value in the `field1` subfield, send a query to `GET /my_index/_search`. To aggregate the values of the `field2` subfield, send an aggregation request to the same endpoint, `GET /my_index/_search`. Because `json_field` is mapped as `nested`, both requests need to wrap the subfield clauses in a `nested` query or `nested` aggregation whose `path` is `json_field`.

In conclusion, parsing JSON fields in Elasticsearch can be achieved using custom mappings, the Ingest Node feature, and the Elasticsearch Query DSL. By following these steps, you can efficiently index, query, and aggregate JSON data in your Elasticsearch cluster.
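The request bodies for the API calls named in this article (`PUT /my_index`, `PUT _ingest/pipeline/json_parser`, `POST /my_index/_doc?pipeline=json_parser`, and `GET /my_index/_search`) are not shown in the text. As a rough sketch only, the Python snippet below builds bodies that would fit those calls. The subfield types (`keyword`, `integer`), the choice of a `term` query and a `terms` aggregation, the aggregation names, the sample values, and all helper names are assumptions made for illustration. The `simulate_json_processor` helper is a hypothetical local stand-in that mimics the described effect of the `json` processor; it does not contact Elasticsearch.

```python
import json

# Assumed body for PUT /my_index. The text only states that json_field is
# `nested` with subfields field1 and field2; the subfield types are guesses.
INDEX_BODY = {
    "mappings": {
        "properties": {
            "json_field": {
                "type": "nested",
                "properties": {
                    "field1": {"type": "keyword"},
                    "field2": {"type": "integer"},
                },
            }
        }
    }
}

# Assumed body for PUT _ingest/pipeline/json_parser.
PIPELINE_BODY = {
    "description": "Parse the JSON string in `message` into `json_field`",
    "processors": [{"json": {"field": "message", "target_field": "json_field"}}],
}


def simulate_json_processor(doc, field="message", target_field="json_field"):
    """Hypothetical local stand-in for the ingest `json` processor."""
    result = dict(doc)  # shallow copy; the source field is left in place
    result[target_field] = json.loads(doc[field])
    return result


def field1_query(value):
    """Assumed search body: exact match on the nested field1 subfield."""
    return {
        "query": {
            "nested": {
                "path": "json_field",
                "query": {"term": {"json_field.field1": value}},
            }
        }
    }


def field2_aggregation():
    """Assumed search body: bucket nested objects by their field2 value."""
    return {
        "size": 0,  # return only aggregation results, no hits
        "aggs": {
            "json_field_docs": {
                "nested": {"path": "json_field"},
                "aggs": {"field2_values": {"terms": {"field": "json_field.field2"}}},
            }
        },
    }


# Body for POST /my_index/_doc?pipeline=json_parser (sample values are made up).
raw_doc = {"message": json.dumps({"field1": "value1", "field2": 42})}
parsed_doc = simulate_json_processor(raw_doc)

print(json.dumps(parsed_doc["json_field"]))
print(json.dumps(field1_query("value1"), indent=2))
```

Each dictionary can be serialized with `json.dumps` and sent as the body of the corresponding request by whatever HTTP client or Elasticsearch client is in use. If the real mapping declares `field1` as `text` rather than `keyword`, a `match` query would be the more suitable choice than `term`.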
